Faster response to user

It is known that providing a faster response to users greatly improves their experience, and many web engineers work hard to reduce the time until the user starts seeing content. In theory, the easiest way to improve speed (without reducing the size of the data being transmitted) is to transfer essential content first, before other data such as images.

With the finalization of HTTP/2, such an approach has become practical thanks to its dependency-based prioritization features. However, not all web browsers (and web servers) prioritize requests optimally. The sad fact is that some of them do not prioritize requests at all, which in some cases actually leads to worse performance than HTTP/1.1.

Since the release of version 1.2.0, we have conducted benchmark tests that measure first-paint time (the time until the web browser starts rendering the new web page), and have added a tuning parameter that can be turned on to optimize first-paint time for web browsers that do not leverage dependency-based prioritization, without disturbing those that implement sophisticated prioritization logic.
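As a minimal sketch of what turning the parameter on could look like, assuming the tuning parameter referred to here is the `http2-reprioritize-blocking-assets` directive and that it is set at the top (global) level of the configuration file:

```yaml
# Assumption: this is the tuning parameter mentioned above.
# When ON, the server gives precedence to assets that block rendering
# for clients that do not use dependency-based prioritization.
http2-reprioritize-blocking-assets: ON
```

Please check the directive name and its default against the documentation of the version you are running before relying on it.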

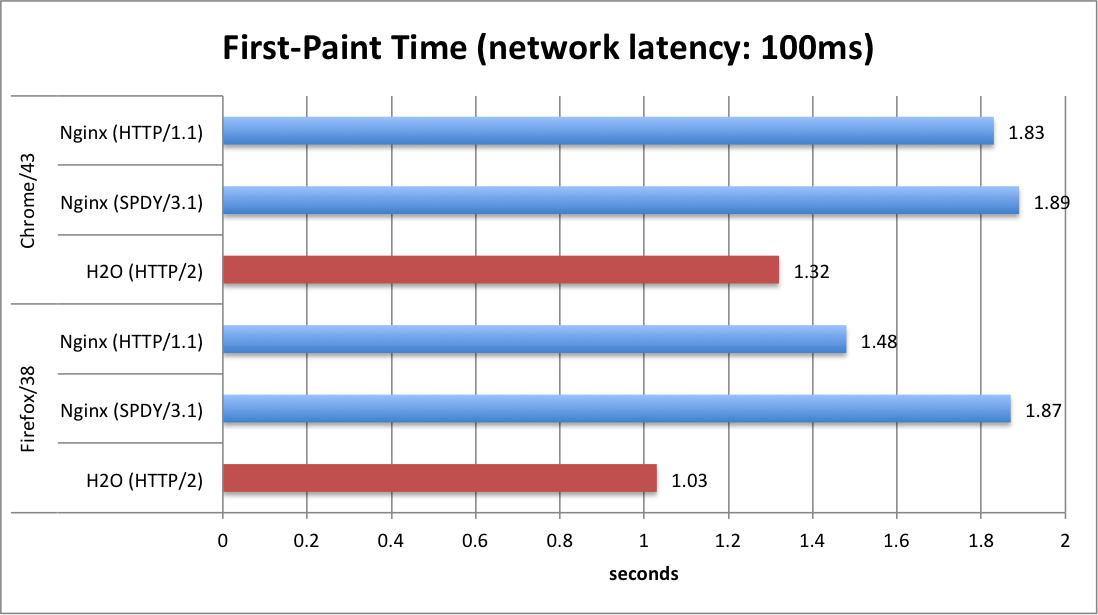

The chart below shows the first-paint time measured using a virtual network with 100ms latency (typical for 4G mobile networks), rendering a web page containing jquery, CSS and multiple image files.

It is evident that the prioritization logic implemented in H2O and in the web browsers together offers a huge reduction in first-paint time. As the developers of H2O, we believe its prioritization logic to be best in class (if not the best of all), not only implementing the specification correctly but also including practical tweaks that optimize for existing web browsers.

In other words, website administrators can provide a better (or the best) experience to their users by switching their web server to H2O. For more information on the topic, please read "HTTP/2 (and H2O) improves user experience over HTTP/1.1 or SPDY".

Version 1.3.0 also supports TCP Fast Open, an extension to TCP/IP that reduces the time required to establish a new connection. The extension is already implemented in Linux (and Android), and is expected to be included in iOS 9. As of H2O version 1.3.0, the feature is turned on by default to provide an even quicker user experience. Kudos go to Tatsuhiko Kubo for implementing the feature.
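Since the feature is on by default, no configuration is needed to benefit from it. For those who need to turn it off (for example, when troubleshooting a middlebox), a minimal sketch follows, assuming the global `tcp-fastopen` directive takes the size of the Fast Open queue and that a size of zero disables the feature:

```yaml
# Assumption: tcp-fastopen sets the queue size for TCP Fast Open,
# and a value of 0 disables it.
tcp-fastopen: 0
```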

FastCGI support

Since the initial release of H2O, many users have asked for this feature; it is finally available! We are also proud that it is easy to use.

First, it can be configured either at path level or at extension level. The latter means that, for example, you can simply map .php files to the FastCGI handler without writing regular expressions to extract PATH_INFO.

Second, H2O can launch the FastCGI process manager under its own control. You do not need to spawn an external FastCGI server and maintain it separately.

Using these features, WordPress, for example, can be set up by writing just a few lines of configuration.

    paths:
      "/":
        # serve static files if found
        file.dir: /path/to/doc-root
        # if not found, internally redirect to /index.php/...
        redirect:
          url: /index.php/
          internal: YES
          status: 307
        # handle PHP scripts using php-cgi (FastCGI mode)
        file.custom-handler:
          extension: .php
          fastcgi.spawn: "PHP_FCGI_CHILDREN=10 exec /usr/bin/php-cgi"

Of course, it is also possible to configure H2O to connect to external FastCGI applications using TCP/IP or Unix sockets.

Support for range-requests

Support for range-requests (HTTP requests that request a portion of a file) is essential for serving audio/video files. Thanks to Justin Zhu it is now supported by H2O.

Conclusion

All in all, H2O has become a much better product in version 1.3 by improving end-user experience and by adding new features.

We plan to continue improving the product. Stay tuned!
